At a Glance

- Companies can’t scale their AI solutions without also reshaping their technology function to enable this massive shift.

- Taking an “AI everywhere” approach to re-architecting the tech stack is a critical, foundational step.

- Equally important will be upgrading current ways of working to make the best use of new AI solutions, which will require bringing the discipline of software development to the adoption of AI models.

This article is part of Bain’s 2024 Technology Report.

Companies are moving beyond the experimentation phase of proofs of concept and minimum viable products, and beginning to scale up generative AI across the organization. As they do, CIOs will need to own, develop, and maintain production-grade AI solutions while efficiently delivering them at scale. At the same time, they will need to enhance their own function’s productivity with the generative AI tools they are deploying to the rest of the organization.

This will fundamentally reshape the technology function across architecture, operating models, talent, and funding approaches, in several important ways:

- re-architecting the entire tech stack with an “AI everywhere” approach, integrating machine learning (ML) and generative AI;

- upgrading ways of working to incorporate AI solution development across product management, software development, operations, and support processes;

- upskilling engineering teams to integrate, test, and scale AI systems to production grade, while using AI tools to boost engineering productivity;

- redefining the mix of tech spending to support AI investments and infrastructure run costs, capturing the efficiencies from AI in areas like software development and service management; and

- reviewing risk management and governance to successfully deploy and upgrade AI models.

While all five of these changes will reshape the technology function, the first two (architecture with AI everywhere and upgraded ways of working) are the critical foundations to get right first.

Architecture with AI everywhere

Generative AI will affect systems across the entire enterprise.

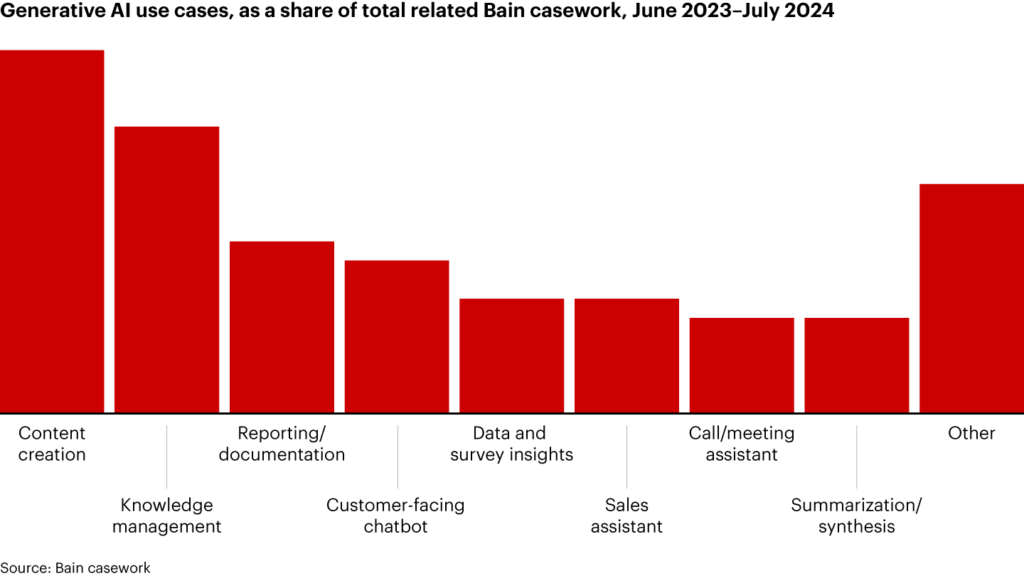

- Operational systems with significant unstructured data will face substantial re-architecting due to generative AI’s ability to make use of previously underutilized data sources. In our experience, the most common solution patterns for generative AI use cases in operational systems fall within the areas of content generation, knowledge management, and reporting and documentation (see Figure 1). CIOs and other tech buyers will need to decide between building or buying generative AI solutions for these uses, based on the potential competitive advantage and the cost and capability required. Currently, many companies are building or tailoring the solutions they need using foundation models because the necessary commercial solutions are not yet ready. Buying may become more practical and popular as existing software-as-a-service (SaaS) solutions incorporate generative AI.

Figure 1

Content creation, knowledge management, and reporting and documentation are among the most common applications of generative AI

- Integration, workflow, and orchestration systems will need to work seamlessly with AI models to enable more complex automation workflows. Additionally, generative AI accelerates the need for modernizing enterprise architecture, such as adopting API-driven integrations and cloud-first infrastructure, to deploy generative AI solutions more effectively. Over time, workflow and orchestration systems could be powered or replaced by agentic AI that can act semi-autonomously, as that capability matures.

- Data analytics and ML systems need to cover more unstructured data assets, and they need an AI-as-a-service (AIaaS) platform and machine learning operations (MLOps) so that common components can be reused and new models deployed efficiently. Data platform capabilities will need to be strengthened to incorporate more unstructured data sets (and treat them with the same discipline as structured ones), shared data catalogues, data versioning, and data lineage supported by data product teams. To enable use of approved models and common components (e.g., vector indexing or retrieval-augmented generation) across use cases, companies need to create an integrated AIaaS platform rather than a point solution for each use case.
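
To make the idea of a shared component concrete, the following is a minimal sketch of a single retrieval service reused by more than one use case. It is illustrative only: the names `SharedVectorIndex`, `embed`, and `build_prompt` are hypothetical, the "embedding" is a toy bag-of-words count standing in for a real embedding model, and no actual model call is made.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding (assumption): word counts stand in for a real embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SharedVectorIndex:
    """Hypothetical platform-owned index that several use cases query."""

    def __init__(self) -> None:
        self._docs = []  # list of (text, vector) pairs

    def add(self, text: str) -> None:
        self._docs.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> list:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self._docs, key=lambda doc: cosine(q, doc[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(index: SharedVectorIndex, task: str, question: str) -> str:
    """Retrieval step of retrieval-augmented generation: fetch context, assemble a prompt."""
    context = "\n".join(index.search(question, k=1))
    return f"Task: {task}\nContext: {context}\nQuestion: {question}"


# Two different use cases share the same index instead of building their own.
index = SharedVectorIndex()
index.add("Refund requests are processed within 14 days")
index.add("Quarterly revenue report is due in April")
support_prompt = build_prompt(index, "customer support", "when are refund requests processed")
finance_prompt = build_prompt(index, "finance reporting", "when is the revenue report due")
```

In this sketch, the customer support and finance use cases differ only in the prompt they assemble; the indexing and retrieval logic is owned once, which is the kind of reuse a platform approach is meant to provide.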

Upgraded ways of working

As generative AI use cases are deployed across critical systems and complexity increases (for example, through daisy-chained AI use cases), they will place further demands on collaboration, quality control, reliability, and scalability. AI models will need to be treated with the same discipline as software code by adopting MLOps processes that apply DevOps practices to manage models through their life cycle.
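
As one illustration of what managing a model "like code" could look like, the sketch below promotes a model version through stages only when it clears a quality gate, and records each transition. The stage names, the `eval_score` field, and the 0.8 threshold are assumptions made for the example, not a prescribed process.

```python
from dataclasses import dataclass, field

# Hypothetical stage names; a real MLOps setup would define its own.
STAGES = ("development", "staging", "production")


@dataclass
class ModelVersion:
    name: str
    version: int
    eval_score: float  # assumed offline evaluation metric between 0 and 1
    stage: str = "development"
    history: list = field(default_factory=list)  # audit trail of stage changes


def promote(model: ModelVersion, min_score: float = 0.8) -> ModelVersion:
    """Move a model one stage forward, but only if it passes the quality gate."""
    if model.stage == STAGES[-1]:
        raise ValueError(f"{model.name} v{model.version} is already in production")
    if model.eval_score < min_score:
        raise ValueError(
            f"{model.name} v{model.version} scored {model.eval_score}, below the gate of {min_score}"
        )
    next_stage = STAGES[STAGES.index(model.stage) + 1]
    model.history.append((model.stage, next_stage))
    model.stage = next_stage
    return model


# A version that clears the gate moves forward one stage at a time.
candidate = ModelVersion("summarizer", version=1, eval_score=0.9)
promote(candidate)  # development -> staging
promote(candidate)  # staging -> production
```

The point of the gate and the history list is the same as that of code review and version control in software delivery: no model reaches production without a check, and every change is traceable.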

Companies should set up a federated AI development model in line with the AIaaS platform. This should define the roles of teams that produce and consume AI services, as well as the processes for federated contribution and how datasets and models are to be shared.

Given the pace of evolution of generative AI, it is also imperative to create AI-first software development processes that allow for rapid iteration of new solutions and architectures. Agile teams need to factor in dependencies between applications, AI models, and data teams.

Software development and service management processes should also adopt generative AI tools, including coding assistants, knowledge management, and error detection. Clear guidelines are required on how to deploy these tools, regularly monitor their impact, and manage risks.

Many of these choices will need to be made in a landscape of rapidly evolving generative AI technologies, necessitating some no-regret moves now while maintaining flexibility to adapt. As a result, this topic will become a priority for CIOs, creating significant change in the function, far beyond what we have seen in recent years.

Used with permission from Bain & Company. The article originally appeared here.