Researchers from the University of Adelaide (Australia) advise greater caution in the use of generative artificial intelligence (AI) in educational contexts. The advice follows a new study that highlights key differences between the modern technology and important ancient philosophy in education.

While AI is being introduced in schools, including through the trial of a generative AI chatbot to support teachers across Australia, new research raises concerns about its limited capacity to provoke thought and its misalignment with deep, philosophical learning.

Large language models (LLMs) are a type of AI language service that has become popular in recent years through tools like ChatGPT, Gemini, and Copilot.

“Students are currently using LLMs for a range of applications; however, it is particularly popular as a tool for grammar and writing assistance,” says Dr Steven Stolz from the University of Adelaide’s School of Education, who conducted the research with Law and Classics honours student Ali Lucas Winterburn and Professor Edward Palmer.

“For teachers, some applications are being used to plan lessons, set homework tasks, conduct assessments, and so on. Somewhat ironically, despite the warnings, Australian schools appear to be heading down the path of using AI, indicating the relevance of this research.”

The study, published in the journal Educational Philosophy and Theory, suggests that LLMs are likely to continue facing issues in their ability to pass on knowledge to students. This conclusion rests on Platonic epistemology – the study of knowledge and understanding based on the ideas of the ancient Greek philosopher Plato.

In simple terms, Platonic epistemology suggests that true knowledge involves understanding unchanging, perfect concepts or “forms” that exist beyond our physical world. According to Plato, what we perceive with our senses in the physical world is just a reflection or imitation of these perfect forms, and real knowledge comes from grasping these forms with our mind.

“In our research project, we concluded that, in addition to the well-known factual unreliability of LLMs, they are also unsuited for meeting the standards of Platonic epistemology,” Dr Stolz says.

“Notably, Plato requires knowledge to derive from insight into the forms and advocates for a specific teaching approach that draws this understanding from the student. LLMs have their own method of dealing with forms, which diverges from the Platonic model.”

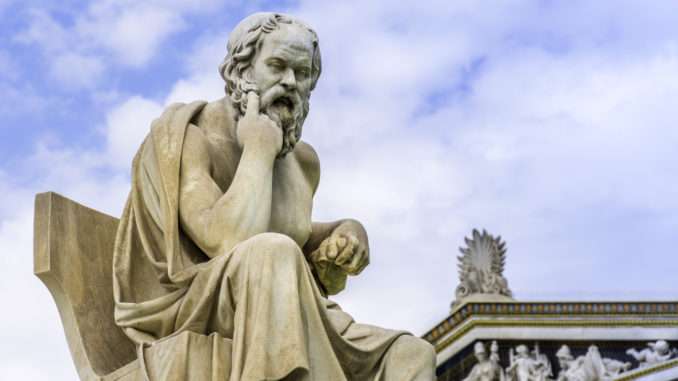

The research team was surprised to find that LLMs are particularly unsuitable for implementing the Socratic method, which involves teaching through open dialogue and thought-provoking questions.

“Plato requires the teacher to have a strong foundation of knowledge to perform the Socratic method properly,” Dr Stolz explains.

“Furthermore, this method relies on understanding the student’s thought processes and anticipating the course of their reasoning — something current LLMs struggle with.”

The research was conducted by reviewing the AI literature and comparing it against a detailed philosophical understanding of ancient philosophy. Dr Stolz says the research is novel and provides a new perspective on learning and teaching.

“We believe an investigation into LLMs from the perspective of Platonic epistemology is significant, given the recent proliferation of LLMs capable of accurately approximating human language,” Dr Stolz explains.

“Although there are many practical questions regarding LLMs, there are also important philosophical questions that arise concerning the epistemic capacity of these new non-human agents.

“Given the historical importance of Plato’s philosophy, we believe it is especially significant to evaluate LLMs within his epistemological framework. This framework has stood the test of time and should be given due consideration when it comes to learning and teaching.”

Dr Stolz raises concerns about the future of generative AI and suggests that more philosophical thinking and engagement with AI are required.

“We should be cautious about the use of generative AI in educational contexts. Greater thought needs to be given to its usage and what that looks like from an educational point of view,” Dr Stolz says.

“We shouldn’t lose sight of the fact that AI can lead to an outsourcing of our thinking. This is deeply problematic from an educational standpoint, as it diminishes an essential part of the educational enterprise: the cultivation of intelligent thinkers.

“We require more philosophical thinking and engagement with AI, particularly in relation to its application in educational contexts.”